Empirical to First-Principles Models

Computing tools currently used in the nuclear industry and in regulatory practice are based primarily on empirical math models that approximate, or fit, existing experimental data. Many have a pedigree reaching back to the 1970s and 1980s and were designed to support decision-making and to evaluate everything from the behavior of individual fuel pellets to severe accident scenarios for an entire power plant.

Programs such as SAPHIRE, FRAPCON, RELAP5, and MELCOR are just a few examples of computing tools currently used in the regulation and operation of nuclear power plants. Although these conventional tools have been updated for today’s technology, they still carry the limitations of their original design intent: to approximate or fit existing data by means of empirical math models.

A key distinction between these conventional tools and those being developed in NEAMS (the Nuclear Energy Advanced Modeling and Simulation program) is that NEAMS tools are based on first-principles physics models. Instead of using experimental data to produce a mathematical model that approximates or fits it, NEAMS is investing in research and development to study the underlying physical phenomena that govern behaviors of interest and then to develop physics models that describe them. Although the distinction may seem subtle, it is critical. The development of the Ideal Gas Law is a good example of this distinction.

In-depth article: The Ideal Gas Law

Taking Reliable Methodologies to a New Level

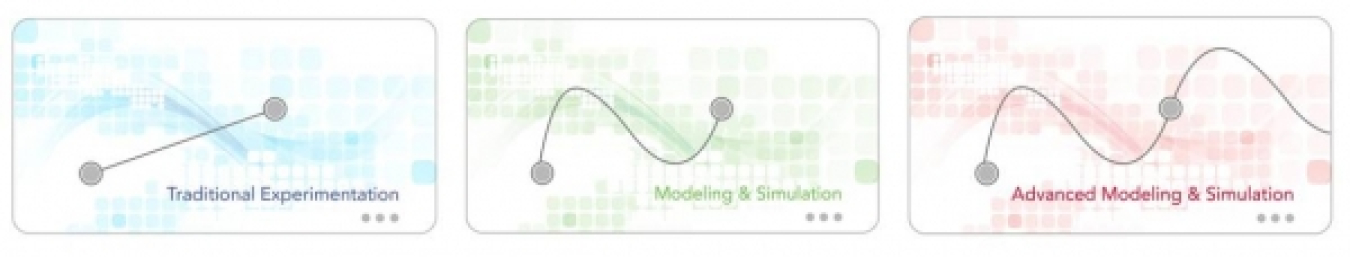

When insights about the behavior of systems are sought beyond the regimes in which those systems have been tested, empirical math models can give misleading descriptions. As a result, designers exploring innovations such as new fuels or reactor types have difficulty predicting behavior with a high level of certainty. A visual comparison of traditional experimentation, existing modeling and simulation methods, and advanced modeling and simulation is presented below.

Traditional experimentation (the blue image) starts with the collection of data. If two data points were plotted on an x-y graph, a common way to estimate the measurements that would be collected between them would be to assume an average or linear relationship. But what if the actual behavior of the system between the two points was not linear (green image)? Predictions based on averaging would then be poor. Experimental data “fitting” informed by theory allows researchers to draw more robust conclusions about what occurs between the measured points, using statistical methods and/or empirically derived relationships such as the Ideal Gas Law. Modeling and simulation extends this curve-fitting methodology by harnessing computers to interpolate, or predict, behaviors between these measurements. Advanced modeling and simulation (red image) seeks to extrapolate, or predict behaviors outside and beyond the observed data, by using fundamental physical laws to describe the system more accurately from the very start. Of course, to be useful, the advanced models will need to be validated with experiments, and their prediction uncertainties quantified, to gain confidence in their predictive ability.
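The interpolation and extrapolation problem described above can be sketched in a few lines of Python. As an assumption for illustration, the “real” system is an ideal gas held at constant temperature, so pressure falls as 1/V; the constant `NRT`, the two measured volumes, and the function names are hypothetical, in arbitrary units.

```python
# Minimal sketch: interpolating and extrapolating from two measurements
# of an ideal gas held at constant temperature, where P = nRT / V.
# NRT = 1.0 is a hypothetical constant in arbitrary units.

NRT = 1.0  # hypothetical product n*R*T (arbitrary units)


def true_pressure(volume: float) -> float:
    """'Real' behavior of the system: the ideal gas law at fixed temperature."""
    return NRT / volume


# Two "experimental" measurements (noise-free for clarity).
v1, v2 = 1.0, 2.0
p1, p2 = true_pressure(v1), true_pressure(v2)


def linear_model(volume: float) -> float:
    """Empirical straight line drawn through the two measured points."""
    slope = (p2 - p1) / (v2 - v1)
    return p1 + slope * (volume - v1)


# Physics-based model: assume P * V is constant (Boyle's law form) and
# calibrate that constant from a single measurement.
pv_constant = p1 * v1


def physics_model(volume: float) -> float:
    """Pressure predicted from the assumed physical law P * V = constant."""
    return pv_constant / volume


for v in (1.5, 4.0):  # 1.5 interpolates, 4.0 extrapolates
    print(f"V={v}: true={true_pressure(v):.3f} "
          f"linear={linear_model(v):.3f} physics={physics_model(v):.3f}")
```

Running it, the straight line overestimates the pressure at V = 1.5 and predicts a physically impossible negative pressure at V = 4.0, while the physics-based model, calibrated from the same data, matches the true value at both points. That agreement is built into this toy case because the model is the true law; a real first-principles model carries its own approximations, which is why it still needs validation against experiment.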

Simulating Real-World Physics

How do we know whether we can trust a simulation? As a first check, we often compare data from a simulation with data from real experiments. If a simulation fails to compare favorably with experiment, is the simulation completely untrustworthy? Or was the simulation accurate and the experiment, or its interpretation, somehow flawed? If a simulation and experiment do compare favorably, can we trust the simulation in all cases? Did the simulation produce a comparable answer only fortuitously, because errors in its models compensated for one another? Or can we trust it only for conditions very similar to the experiment? Is it possible that the simulation and the experiment are flawed in the same ways, so that they agree with each other while neither reflects the real world? These and many related questions strike at the heart of what it means to develop modeling and simulation capabilities that are predictive.

Verification, Validation and Uncertainty Quantification

Predictive simulation requires demonstrating not only that a simulation compares favorably with a wide variety of experiments, but also that differences between simulation and experiment can be meaningfully quantified and bounded, that the simulation does represent relevant aspects of real-world phenomena, and that we can specify the range of conditions under which the simulation can be expected to represent the real world faithfully, along with the bounds beyond which it cannot.
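Quantifying a discrepancy and stating the conditions under which a model holds can be sketched in Python. This is an illustrative assumption, not a NEAMS tool: the “simulation” is the ideal gas law, the “experiment” is synthetic data from the van der Waals equation with hypothetical constants `A` and `B`, and the 5 percent `TOLERANCE` is an arbitrary acceptance criterion.

```python
# Minimal sketch: quantify simulation-vs-experiment discrepancy and report
# the range of tested conditions where it stays within a chosen tolerance.
# The "experiment" is synthetic: the van der Waals equation with
# hypothetical constants stands in for measurements of a non-ideal gas.

RT = 1.0          # hypothetical R*T for one mole (arbitrary units)
A, B = 0.1, 0.05  # hypothetical van der Waals constants
TOLERANCE = 0.05  # assumed acceptance criterion: 5% relative discrepancy


def simulation(volume: float) -> float:
    """Model under test: ideal gas law for one mole."""
    return RT / volume


def synthetic_experiment(volume: float) -> float:
    """Stand-in 'measurement': van der Waals equation for one mole."""
    return RT / (volume - B) - A / volume**2


def relative_discrepancy(volume: float) -> float:
    """|simulation - measurement| as a fraction of the measurement."""
    measured = synthetic_experiment(volume)
    return abs(simulation(volume) - measured) / abs(measured)


volumes = [0.2, 0.5, 1.0, 2.0, 5.0]  # tested conditions
validated = [v for v in volumes if relative_discrepancy(v) <= TOLERANCE]

for v in volumes:
    print(f"V={v}: discrepancy={relative_discrepancy(v):.1%}")
print(f"Within tolerance at tested volumes {min(validated)} to {max(validated)}")
```

In this toy case the ideal-gas model stays within tolerance only at the three largest volumes tested (the lower pressures), so the supportable claim is agreement from V = 1.0 to 5.0 at the tested points, not everywhere. A real validation would also account for measurement uncertainty and would need evidence for behavior between and beyond the tested conditions.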

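For this reason, while some NEAMS researchers are advancing models for nuclear fuels and reactors, other computational scientists, mathematicians and statisticians are developing tools that test these models for accuracy, validity, applicability and limitations. This work spans verification (confirming that the software solves its model equations correctly), validation (confirming that those equations represent the real system) and uncertainty quantification (estimating how confident we can be in a given prediction).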

When we cannot trust simulation results as much as we might wish, we compensate for our lack of confidence by increasing the safety margins of the real-life products we build. If a simulation indicates that a 4-foot-thick concrete wall is sufficient to contain a reactor core, we may double or triple that thickness, at significantly increased cost, to minimize risk. For the same reason, researchers report their results as a range that protects their designs against the estimated uncertainties. Predictive simulations can justify reducing overly conservative margins by providing accurate insight into risks that are otherwise difficult or impossible to assess.
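The link between uncertainty and margin can be sketched with a simple, assumed rule: take the upper end of the range as the best estimate plus `K` standard uncertainties. Only the 4-foot estimate comes from the example in the text; the coverage factor `K = 2`, the uncertainty values, and the function name are hypothetical, and the rule is a common measurement-uncertainty convention used here for illustration, not a structural design procedure.

```python
# Minimal sketch: how a narrower uncertainty shrinks a protective margin.
# All numbers except the 4-foot estimate are hypothetical, and the
# "estimate + K * sigma" rule is an assumed illustration, not a design code.

BEST_ESTIMATE_FT = 4.0  # wall thickness the simulation says is sufficient
K = 2.0                 # assumed coverage factor (about 95% if errors are normal)


def protective_thickness(sigma_ft: float) -> float:
    """Upper end of the range: best estimate plus K standard uncertainties."""
    return BEST_ESTIMATE_FT + K * sigma_ft


# Blanket margins: simply double or triple the estimate.
blanket = [BEST_ESTIMATE_FT * factor for factor in (2, 3)]
print(f"Blanket safety factors: {blanket} ft")

for sigma in (1.0, 0.5, 0.25):  # hypothetical uncertainties in feet
    print(f"sigma={sigma} ft -> {protective_thickness(sigma)} ft")
```

Even with a 1-foot uncertainty the range tops out at 6 feet, below the 8 to 12 feet produced by doubling or tripling, and each reduction in uncertainty tightens it further. This is the sense in which better-quantified predictions can justify smaller margins.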