To solve some of the world’s biggest scientific problems, you need some of the world’s biggest computers. The teams at the Department of Energy’s user

August 16, 2017

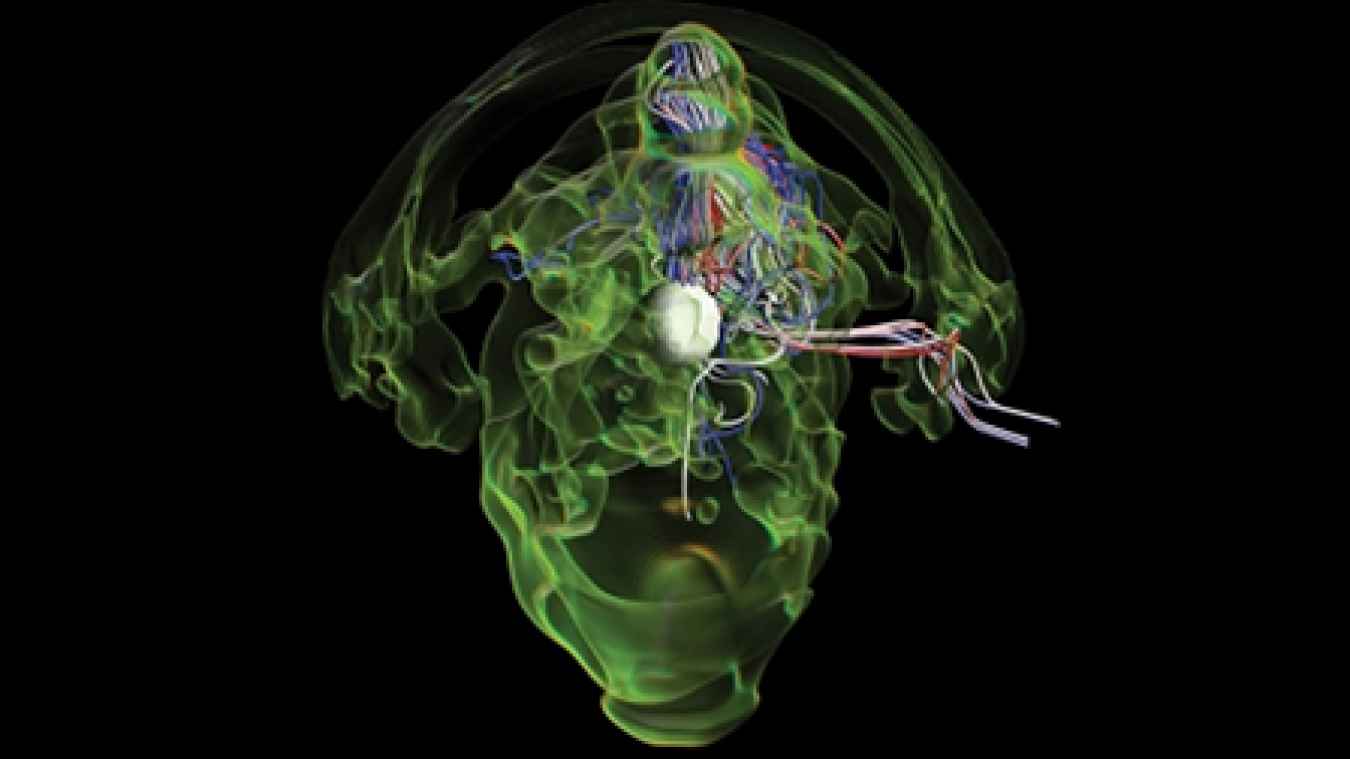

Scientists used Titan to model a supernova explosion from the collapsing core of a super-giant star.

As you walk out of an electronics store clutching the cardboard box with your new computer, you dream of all of the cool things you'll be able to do, from writing your screenplay to slaying monsters in a multiplayer game. Managers of the Department of Energy (DOE) Office of Science's supercomputers have similar visions, whether crunching particle physics data or modeling combustion inside of engines.

But while you can immediately plug in your laptop and boot it up, things aren't so simple for the three DOE Office of Science supercomputing user facilities. These are the National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory in California, the Oak Ridge Leadership Computing Facility (OLCF) in Tennessee, and the Argonne Leadership Computing Facility (ALCF) in Illinois. These supercomputers are often the first of their kind: serial number zero. They aren't even delivered intact – the vendors assemble them at the facilities. Planning for and installing a new one takes years. But the challenge of setting up and keeping these computers running is well worth it. After all, these computers make it possible for researchers to tackle some of DOE's and the nation's biggest problems.

Why Supercomputers?

Supercomputers solve two primary scientific challenges: analyzing huge amounts of data and modeling immensely complex systems.

Many of the Office of Science's user facilities coming online in the near future will produce more than 1 terabyte of data every second. That's enough to fill almost 13,000 DVDs a minute. Computers need to store and sort through this data to find the few pieces relevant to scientists' research questions. Supercomputers are much more efficient at completing these tasks than conventional computers are. The Office of Science's supercomputers take only a single day to run calculations that would take a regular computer 20 years.
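
The figures above can be checked with a little arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes decimal units (1 terabyte = 1,000 gigabytes), a single-layer DVD capacity of 4.7 gigabytes, and 365-day years, none of which the article specifies. The function names are made up for this example.

```python
# Back-of-the-envelope check of the data-rate and run-time figures.
# Assumptions (not stated in the article): decimal units, 4.7 GB DVDs, 365-day years.

GB_PER_TB = 1_000
DVD_CAPACITY_GB = 4.7


def dvds_per_minute(rate_tb_per_s: float, dvd_gb: float = DVD_CAPACITY_GB) -> float:
    """Number of DVDs filled each minute at the given data rate."""
    gb_per_minute = rate_tb_per_s * GB_PER_TB * 60
    return gb_per_minute / dvd_gb


def implied_speedup(years_on_regular: float, days_on_super: float) -> float:
    """Ratio of run time on a regular computer to run time on a supercomputer."""
    return years_on_regular * 365 / days_on_super


print(round(dvds_per_minute(1)))      # 12766, i.e. "almost 13,000"
print(round(implied_speedup(20, 1)))  # 7300
```

Under these assumptions, 1 terabyte per second fills roughly 12,800 DVDs a minute, and one day versus 20 years corresponds to a speedup of about 7,300 times.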

Modeling complex systems, such as ocean circulation, can be even more challenging. Because all the system's parts influence each other, it's impossible to fully break them apart. Scientists also want to minimize assumptions, work from first principles, and use real-world data. This further adds to the models' complexity. Even if a conventional computer can run a simulation once, researchers often need to run the same simulation several times with different parameters.

Without a supercomputer, "You just might never have enough time to solve the problem," said Katie Antypas, NERSC's Division Deputy and Data Department Head.

Visioning a Supercomputer

The user facilities' staff members often start planning a new supercomputer before they finish setting up the last one. The technology changes so quickly that it's more cost effective and energy efficient to build a new computer than to continue running the old one. The ALCF staff began planning in 2008 for Mira, its largest supercomputer. It launched in 2013.

The user facilities' teams start the process by asking scientists what capabilities the computers should have that would help future research. With requirements in hand, they put out a request for proposals to manufacturers.

Just envisioning these future computers is challenging.

For example, recalling the planning for OLCF's current supercomputer, Titan, project director Buddy Bland said, "It was not obvious how we were going to get the kind of performance increase our users said they needed using the standard way we had been doing it."

For more than a year, OLCF worked with its users and computing vendor Cray to develop a system and transition plan. When OLCF launched Titan in 2012, it combined CPUs (central processing units) like those in regular computers with GPUs (graphics processing units), which are usually used in gaming consoles. The GPUs use less power and can handle a greater number of instructions at one time than CPUs. As a result, Titan runs 10 times faster and is five times more energy efficient than OLCF's previous supercomputer.

Adapting Hardware and Software

The user facilities work to make sure everything is ready long before the manufacturer delivers the computer.

Preparing the physical site alone can take up to two years. For its latest supercomputer, Cori, NERSC installed new piping under the floor to connect the cabinets and cooling system. OLCF has already added substations and transformers to its power plant to accommodate Summit, its new supercomputer that will start arriving in 2017.

Adapting software is even more demanding. Programs written for other computers – even other supercomputers – may not run well on the new ones. For example, Titan's GPUs require different programming techniques than traditional CPU-based computer systems do. Even if the programs will run, researchers need to adapt them to take advantage of the supercomputers' new, powerful features.

Theta, Argonne's most recent supercomputer, launched in July 2017. It will be a stepping-stone to the facility's next leadership-class supercomputer.

One of the biggest challenges is effectively using the supercomputers' hundreds of thousands of processors. Programs must break problems into smaller pieces and distribute them properly across the units. They also need to ensure the parts communicate correctly and the messages don't conflict.
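
The idea of breaking a problem into pieces and distributing them can be sketched in a few lines. The example below is a toy illustration, not how any of the facilities' codes work: it splits a sum of squares into near-equal chunks, hands each chunk to a worker, and combines the partial results. Python threads stand in for processors here purely to show the pattern, so no speedup should be expected from this toy; production codes on supercomputers commonly use message-passing libraries such as MPI instead. All function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_range(n_items: int, n_workers: int) -> list[tuple[int, int]]:
    """Divide indices 0..n_items-1 into contiguous, near-equal (start, stop) chunks."""
    base, extra = divmod(n_items, n_workers)
    chunks = []
    start = 0
    for worker in range(n_workers):
        # The first `extra` workers take one additional item to balance the load.
        size = base + (1 if worker < extra else 0)
        chunks.append((start, start + size))
        start += size
    return chunks


def partial_sum_of_squares(bounds: tuple[int, int]) -> int:
    """Work done by one worker on its own piece of the problem."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def parallel_sum_of_squares(n_items: int, n_workers: int = 4) -> int:
    """Distribute the chunks across workers, then combine the partial results."""
    chunks = split_range(n_items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


print(split_range(10, 4))              # [(0, 3), (3, 6), (6, 8), (8, 10)]
print(parallel_sum_of_squares(1_000))  # 332833500
```

Because the chunks do not overlap and each worker returns its own partial result, the pieces cannot conflict with one another. Real simulations are harder: neighboring pieces usually need to exchange data as the calculation proceeds.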

Other issues include taking advantage of the computers' massive amounts of memory, designing programs to manage failures, and reworking foundational software libraries.

To stress-test the computers and smooth the way for future research, each facility has an early user program. In exchange for dealing with the new computer's quirks, participants get special access as well as workshops and hands-on help. For this advance peek, user facility managers choose projects that reflect major research areas, including astrophysics, materials sciences, seismology, fusion, earth systems, and genomics.

To prepare for Cori, NERSC held a series of "dungeon sessions" that brought together early users with engineers from manufacturers Intel and Cray. During these three-day workshops, often held in windowless rooms, researchers improved their code. Afterwards, some algorithms within programs ran 10 times faster than they had before.

"What's so valuable is the knowledge and strategies not only to fix the bottlenecks we discovered when we were there, but other [problems] that we find as we transfer the program to Cori," said Brian Friesen from NERSC. He revised a cosmology simulation during a dungeon session. "It definitely takes a lot of work, but the payoff can be substantial."

Troubleshooting Time

It all comes together when the supercomputer is finally delivered. But even then the computer isn't quite ready for action.

When it arrives, the team assesses the computer to ensure it fulfills the user facility's needs. First, they run simple tests to make sure that it meets all of the performance requirements. Then, after loading it up with the most demanding programs possible, the team runs it for weeks on end.

"There's a lot of things that can go wrong, from the very mundane to the very esoteric," said Susan Coghlan, ALCF project director.

When ALCF launched Mira, they found the water used to cool the computer wasn't pure enough. Particles and bacteria were interacting with the pipes, giving a new meaning to dealing with computer bugs. With OLCF's Titan, the GPUs got so hot that they expanded and cracked the solder attaching them to the motherboard.

More common issues include glitches in the operating system and problems with how different parts of the computer pass messages to each other. As challenges arise, the facilities' staff work with the manufacturers to fix them.

"Scaling these applications up is heroic. It's horrific and heroic," said Jeff Nichols, Oak Ridge National Laboratory's associate laboratory director for computing and computational sciences. "It's so exhausting that you usually just go home and sleep."

The early user program participants then get several months of exclusive access.

"I always find it very exciting when the Early Science users get on," said Coghlan, referring to ALCF's Early Science Program. "That means it's a real machine for science." After that, the facility opens the computers up to requests from the broader scientific community.

The lessons learned from these machines will extend to launching the Office of Science's next high-performance computing challenge: exascale computers. Exascale computers, which will be at least 50 times faster than today's most powerful computers, will strengthen the nation's security, economic competitiveness, and scientific discovery. Although exascale machines aren't expected until 2021, the facilities' managers are already dreaming about what they'll be able to do – and planning how to make it happen.

"Our desire is to deliver leadership-class computers," said Nichols. "You have to be very strategic and visionary in terms of generating what that next-generation supercomputer can be."

The Office of Science is the single largest supporter of basic energy research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information please visit https://science.energy.gov.

Shannon Brescher Shea

Shannon Brescher Shea (shannon.shea@science.doe.gov) is the social media manager and senior writer/editor in the Office of Science’s Office of Communications and Public Affairs. She writes and curates content for the Office of Science’s Twitter and LinkedIn accounts as well as contributes to the Department of Energy’s overall social media accounts. In addition, she writes and edits feature stories covering the Office of Science’s discovery research and manages the Science Public Outreach Community (SPOC). Previously, she was a communications specialist in the Vehicle Technologies Office in the Office of Energy Efficiency and Renewable Energy. She began at the Energy Department in 2008 as a Presidential Management Fellow. In her free time, she enjoys bicycling, gardening, writing, volunteering, and parenting two awesome kids.