A team led by the University of Colorado-Boulder has developed a combined cooling and airflow simulation and optimization toolkit that has already enabled significant energy savings in two data centers.

March 21, 2021

Data centers, dedicated computing and digital storage facilities, power a significant portion of the modern economy. Large companies operate massive data centers that underpin much of our telecommunications system and the internet. Data centers also represent a significant portion of domestic electricity use, accounting for 1.8 percent in 2016, or slightly less than the average state.

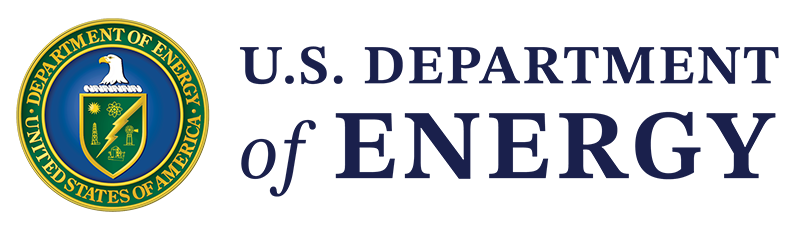

Electricity use is the most significant cost driver in data centers, often eclipsing the cost of capital and labor. This electricity serves two main purposes: powering the computing equipment itself and then cooling it. Computing energy use is minimized using techniques such as virtualization, scheduling, and load-balancing. Cooling energy use is minimized via efficient system design and optimized operation, which includes careful management of airflow within the data center. Computers have different standards for thermal comfort than humans. To a computer, air temperature matters only at its inlet. Because of this, many data centers are arranged in alternating hot-aisle/cold-aisle configurations, in which inlets face both sides of every other aisle and only those aisles are cooled. In the temperature maps below, cold aisles are dark blue whereas hot aisles are warmer green or yellow. The temperature differences between hot and cold aisles create airflows which, when not properly controlled, can result in energy that is wasted on cooling hot aisles.

To help data-center operators reduce cooling costs, a team led by the University of Colorado (CU) Boulder, with help from Berkeley Lab and Schneider Electric, developed the Data Center Toolkit: an open-source modeling platform that can be used to optimally manage data-center cooling and airflow simultaneously. The project was funded by a 2016 award from DOE’s Building Technologies Office (BTO). Project leader and CU Boulder Professor Wangda Zuo credits the award for bringing together the right team for the project. “To tackle such a complex problem, we needed a close collaboration among academia, our national labs, and industry,” Zuo said. “The BTO award supported this collaboration.”

In prototyping the Data Center Toolkit, the team worked with two data centers, one in Florida and one in Massachusetts. They developed models of the data centers, calibrated them, and used them to suggest new control strategies and even capital upgrades. These initial pilots greatly exceeded the project’s expectations. The project proposal predicted cooling energy savings of 30 percent, but the team managed to achieve 53 percent savings in the Florida data center and 74 percent in the Massachusetts data center.

The better-than-expected savings at the Massachusetts data center were the result of a $110,000 cooling system retrofit guided by the modeling analysis. “The tool was able to correctly pinpoint system deficiencies and improvements that paralleled the conclusions that the outside engineering firm had come up with,” said David Plamondon, operator of the Massachusetts data center. “The difference, of course, is that the tool was able to give us an overall estimate on what the savings would be as a result of those system improvements.” In both data centers, joint cooling and airflow optimization proved to be essential: optimizing these functions separately achieved energy savings of only 27 percent and 46 percent, respectively.
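The gap between joint and separate optimization can be illustrated with a deliberately simple sketch. The code below is not the Data Center Toolkit and does not use its models: the functions `chiller_kw`, `fan_kw`, and `inlet_c`, the 27 °C inlet limit, and every coefficient are hypothetical values chosen only to show the effect. It assumes chiller power falls as the supply-air setpoint rises, fan power follows the cube of the airflow fraction, and server inlet temperature rises when airflow drops. A grid search then compares tuning each variable alone against tuning both together.

```python
# Toy model only: every coefficient below is invented for illustration
# and has no connection to the two pilot data centers.

def chiller_kw(supply_c):
    """Chiller power falls as the supply-air setpoint rises (hypothetical linear fit)."""
    return 100.0 - 3.0 * (supply_c - 15.0)

def fan_kw(flow):
    """Fan power scales with the cube of the flow fraction (fan affinity law)."""
    return 40.0 * flow ** 3

def inlet_c(supply_c, flow):
    """Server inlet temperature: supply air plus a recirculation penalty
    that grows as airflow drops (hypothetical form)."""
    return supply_c + 4.0 / flow

INLET_LIMIT_C = 27.0                                   # assumed inlet limit
SUPPLY_GRID = [15.0 + 0.5 * i for i in range(25)]      # 15.0 to 27.0 deg C
FLOW_GRID = [(40 + j) / 100 for j in range(61)]        # 0.40 to 1.00

def best_power(supplies, flows):
    """Lowest total cooling power over all grid points that keep inlets within the limit."""
    feasible = [
        chiller_kw(t) + fan_kw(f)
        for t in supplies
        for f in flows
        if inlet_c(t, f) <= INLET_LIMIT_C + 1e-9
    ]
    return min(feasible)

def savings_pct(power_kw, baseline_kw):
    """Percent reduction relative to the baseline."""
    return 100.0 * (baseline_kw - power_kw) / baseline_kw

# Baseline: coldest setpoint, full airflow.
baseline = chiller_kw(15.0) + fan_kw(1.0)

# Separate optimization: tune one variable, hold the other at its baseline value.
cooling_only = best_power(SUPPLY_GRID, [1.0])
airflow_only = best_power([15.0], FLOW_GRID)

# Joint optimization: search both variables together.
joint = best_power(SUPPLY_GRID, FLOW_GRID)

for label, power in [("cooling only", cooling_only),
                     ("airflow only", airflow_only),
                     ("joint", joint)]:
    print(f"{label:>12}: {power:6.1f} kW, {savings_pct(power, baseline):4.1f}% savings")
```

In this toy setup the joint search finds a lower total than either single-variable search, because raising the setpoint and lowering airflow both consume the same inlet-temperature margin and the cheapest trade-off lies between the two extremes. The real toolkit resolves that coupling with detailed cooling-system and airflow simulation rather than closed-form formulas.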

The Data Center Toolkit makes use of the Modelica Buildings Library for HVAC system simulation, a fast fluid dynamics (FFD) algorithm for airflow modeling, and the GenOpt program for optimization. The Modelica Buildings Library provided an extensible platform for cooling system simulation. The team developed several new models for data-center-specific cooling equipment and contributed them back to the library. CU Boulder PI Wangda Zuo originally developed the FFD algorithm for his Ph.D. thesis. Led by Schneider Electric co-PI Jim VanGilder, the team modified the basic FFD approach to bring its accuracy and stability on par with expensive commercial modeling tools while retaining its speed advantage for data center applications.

The core components of the system, including the new Modelica models and the FFD model, are available under open-source licenses. “Schneider Electric and its partners already use the data-center thermal design tool, EcoStream, based on the FFD engine developed during this project,” said VanGilder. “We now look forward to making this technology broadly available.”