Scientists are developing simulations of the universe and its evolution that take advantage of new, powerful exascale supercomputers.

March 27, 2024

Creating multiple universes to see how they run might be tempting to scientists, but it’s obviously not possible. That is, as long as you need physical universes. If you can make do with virtual ones, there are far more options. Cosmologists supported by the Department of Energy’s Office of Science are developing computer simulations of the universe designed to run on exascale computers. These simulations draw on the supercomputers’ power to provide new insights into our universe’s past and present.

Scientists are developing these simulations to help them explore some of the biggest questions in physics. Cosmologists know that dark matter makes up about 85 percent of the mass in the universe. However, they are still working to understand how it influences the structure of the universe itself. The light from supernovae has helped us understand that the universe’s expansion is accelerating. But the nature of the “dark energy” causing this accelerated expansion is still a mystery.

Simulations use observational data from telescopes that map the current sky to test various hypotheses about how the universe evolved. The DOE’s Office of Science supports a number of telescopes that collect huge amounts of data. The first batch of data from the Dark Energy Spectroscopic Instrument in Arizona alone has information on two million celestial objects. When the Legacy Survey of Space and Time (LSST) camera at Vera C. Rubin Observatory begins collecting data, it will take hundreds of images every night for 10 years.

Cosmologists use this data to create massive maps of the sky that stretch far beyond what we can see from Earth with the naked eye. These “sky surveys” can help us answer questions about dark energy, dark matter, and other cosmic phenomena. The simulations can also help scientists discover the best strategies to observe the sky: where to look, how often, and how deeply.

Beyond analyzing current observations, cosmologists develop simulations that allow them to create many different versions of the same universe. Each version is based on different assumptions about how the universe evolved. By comparing these versions to the maps based on observations, scientists can see which assumptions may be the closest to reality.
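
The comparison step described above can be illustrated with a toy sketch. Everything in it is hypothetical: the `toy_summary` function, the `amplitude` parameter, and the "observed" numbers are invented for illustration and are not taken from the ExaSky codes or any real survey. The sketch assumes each simulated universe is reduced to a few summary numbers, which are then scored against the observed values with a simple chi-squared statistic.

```python
# Toy illustration only: the model, parameter, and data below are hypothetical.

def toy_summary(amplitude, scales):
    """Stand-in for a simulation: a summary statistic at each scale."""
    return [amplitude * s ** -1.8 for s in scales]

def chi_squared(model, observed, sigma):
    """Sum of squared, uncertainty-weighted differences."""
    return sum(((m - o) / e) ** 2 for m, o, e in zip(model, observed, sigma))

scales = [1.0, 2.0, 4.0, 8.0]

# Pretend "observations": the amplitude-1.0 model plus small fixed offsets.
offsets = [0.02, -0.01, 0.005, -0.002]
observed = [v + d for v, d in zip(toy_summary(1.0, scales), offsets)]
sigma = [0.05 * o for o in observed]  # assumed 5 percent uncertainty

# Each candidate amplitude plays the role of one "version" of the universe.
candidates = [0.8, 1.0, 1.2]
scores = {a: chi_squared(toy_summary(a, scales), observed, sigma)
          for a in candidates}

best = min(scores, key=scores.get)
print("best-fitting assumption:", best)
```

In this toy setup, the candidate with the lowest score is the assumption that comes closest to the pretend observations. Real analyses compare far richer statistics and many more parameters.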

The ExaSky project focused on developing these simulations to run on exascale computers. Exascale computers can conduct a billion billion floating point operations (a form of calculation) a second. In comparison, it would take everyone in the world doing math problems for five years straight to complete by hand the number of calculations such a computer performs in a single second. In May 2022, Frontier at the Oak Ridge Leadership Computing Facility (a DOE Office of Science user facility) became the first exascale computer to come online. The next one, Aurora at the Argonne Leadership Computing Facility (another user facility), will launch soon. These computers have both the performance and the memory to handle the massive amounts of computation the simulations require and the data they produce. In addition to supporting the user facilities, the Office of Science also supported ExaSky through the Exascale Computing Project and the Scientific Discovery through Advanced Computing program.
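
The "everyone in the world for five years" comparison can be checked with a back-of-the-envelope calculation. The sketch below is only an estimate under stated assumptions: a world population of about 8 billion and a pace of one calculation per person per second are round numbers chosen here and are not given in the article.

```python
# Back-of-the-envelope check; population and pace are assumed round numbers.

OPS_IN_ONE_EXASCALE_SECOND = 1e18   # a billion billion operations
WORLD_POPULATION = 8e9              # assumption: about 8 billion people
SECONDS_PER_YEAR = 365.25 * 24 * 3600

def years_by_hand(total_ops, people, ops_per_person_per_second):
    """Years needed for `people` working nonstop to finish `total_ops`."""
    seconds = total_ops / (people * ops_per_person_per_second)
    return seconds / SECONDS_PER_YEAR

# Assumption: each person completes one calculation per second.
years = years_by_hand(OPS_IN_ONE_EXASCALE_SECOND, WORLD_POPULATION, 1.0)
print(f"about {years:.1f} years at one calculation per second")

# Pace per person that would make the job take exactly five years.
pace_for_five_years = OPS_IN_ONE_EXASCALE_SECOND / (
    WORLD_POPULATION * 5 * SECONDS_PER_YEAR
)
print(f"five years implies about {pace_for_five_years:.2f} calculations per second")
```

Under these assumptions the answer comes out at roughly four years, which matches the article's five-year figure if each person works slightly slower than one calculation per second.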

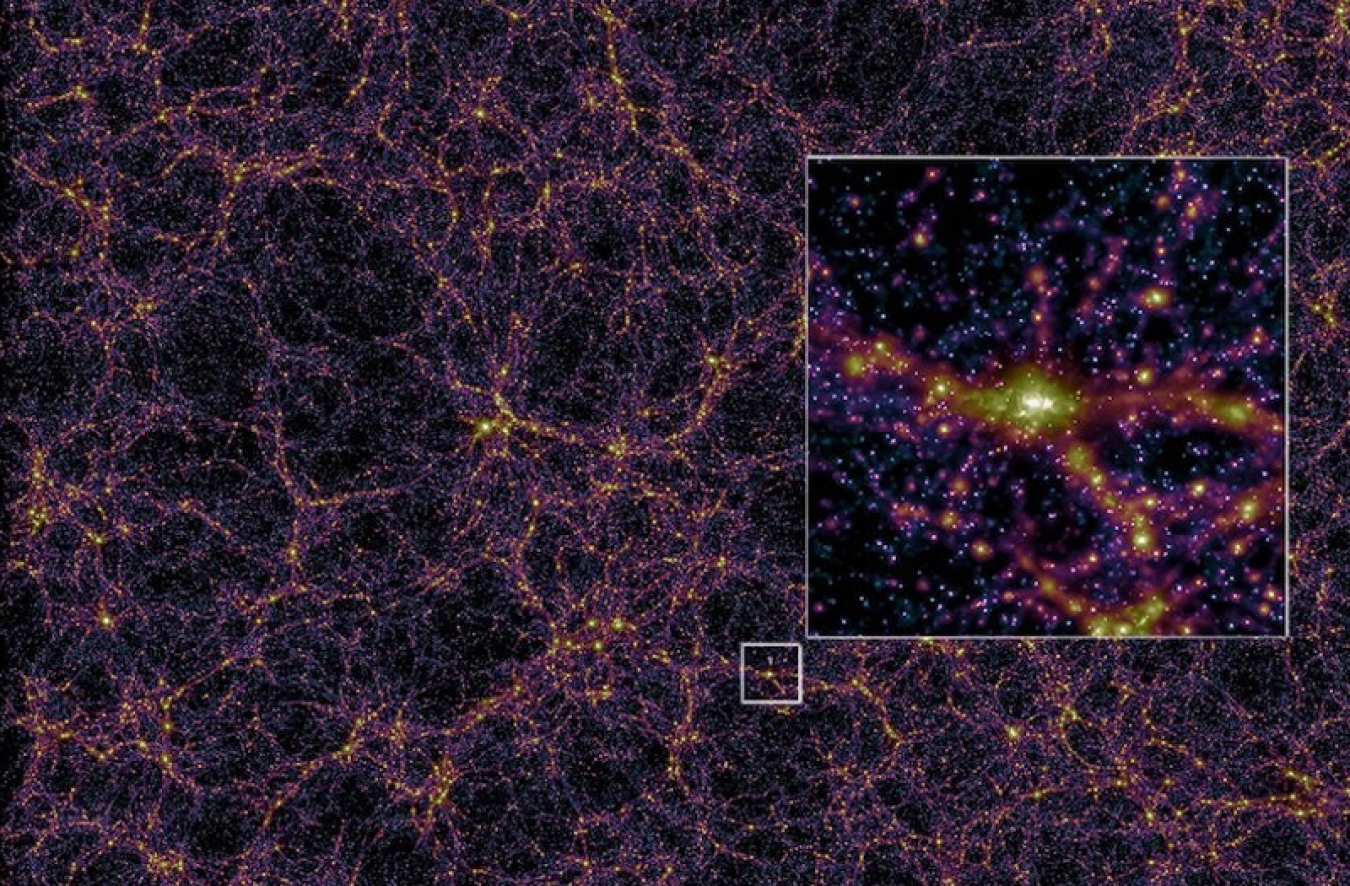

Fortunately, the scientists on ExaSky weren’t starting from scratch. This project drew on two major sets of computer codes that powered previous simulations. The codes simulate how billions of galaxies formed and arranged themselves in what scientists call the cosmic web. The programs include parameters describing both the structure and the physics of individual galaxies, as well as how galaxies interact with one another and with dark matter via gravity. The scientists on the ExaSky project updated these codes to take full advantage of exascale computers’ capabilities. Exascale computers use graphics processing units (GPUs), similar to the ones used for video game graphics, for processing in addition to central processing units (CPUs) like those in a typical laptop. Adjusting to this different form of hardware often requires substantial revisions to the codes.

But running these simulations on exascale computers has major advantages. These computers can run very large simulations much faster. This speed allows scientists to shorten the time needed to solve certain problems from months to hours. It will also enable them to tackle new questions that would previously have been impossible to address.

In addition, the ExaSky programs can simulate a huge range of scales, from the size of the smallest galaxies to a distance of less than a fifth of the way to the edge of the observable universe. That’s a ratio of 1 to 10 million between the smallest and largest scales!

Exascale computers are also allowing scientists to develop new models that can describe processes that current simulations can’t include. For example, active galactic nuclei are regions in the central cores of galaxies that give off radiation. They’re most likely caused by supermassive black holes. While these active galactic nuclei are millions of times more massive than our sun, the processes that form them still occur on too small a scale for current simulations to include. The ExaSky simulations will be able to include these phenomena using approximate models.

The biggest questions in cosmology and the biggest structures in the universe are hard for us to wrap our heads around. By running simulations on exascale computers, scientists are providing insights into the past, present, and future of our universe.

Shannon Brescher Shea

Shannon Brescher Shea (shannon.shea@science.doe.gov) is the social media manager and senior writer/editor in the Office of Science’s Office of Communications and Public Affairs. She writes and curates content for the Office of Science’s Twitter and LinkedIn accounts as well as contributes to the Department of Energy’s overall social media accounts. In addition, she writes and edits feature stories covering the Office of Science’s discovery research and manages the Science Public Outreach Community (SPOC). Previously, she was a communications specialist in the Vehicle Technologies Office in the Office of Energy Efficiency and Renewable Energy. She began at the Energy Department in 2008 as a Presidential Management Fellow. In her free time, she enjoys bicycling, gardening, writing, volunteering, and parenting two awesome kids.