The Energy Department and National Cancer Institute are building tools that will improve the screening process for new cancer drugs.

May 6, 2019

Researchers explore scalable deep learning at a CANDLE workshop. | Photo by Argonne National Laboratory

DOE scientists, in partnership with researchers from the National Cancer Institute (NCI), are building artificial intelligence (AI) tools that will improve the screening process for new cancer drugs and help match patients to the best treatments available.

As discussed in a previous post, the partnership began in 2015, when DOE and NCI teamed up to launch the “Joint Design for Advanced Computing Solutions for Cancer (JDACS4C)” project, which aims to develop next-generation supercomputing technology and accelerate cancer research. JDACS4C currently has three pilots. This post focuses on the one that is taking important steps toward AI that could match individual patients to the most effective cancer drugs. The vision of this pilot is ambitious: researchers aim to develop a single model that learns from many samples and many experiments to predict drug responses across cancer types as quickly and accurately as possible.

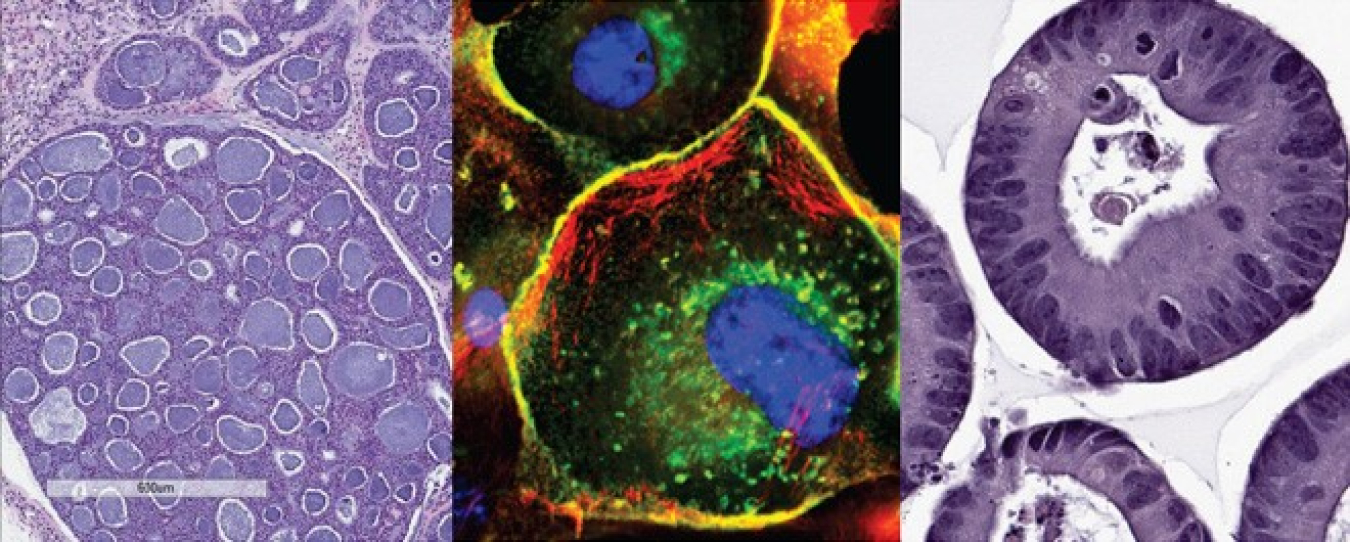

The project team is training AI on data from a large-scale repository of patient-derived tumor models developed by NCI, plus data on cell lines and screening results collected over the last 25 years. A wealth of well-characterized, clinically annotated, quality-controlled training data is important for capturing the diversity of the patient and tumor population and for making accurate predictions. Much of the available data comes from multiple sources, and the historical data are often unstructured, so the team’s early work has focused on standardizing and validating it.

Previous efforts to use AI for cancer have relied on well-defined, structured data sets describing single classes of tumors or relatively small classes of drugs. This pilot’s scope is much larger: it encompasses complex data sets with many variable features. Some of those features are relevant to whether a drug will work; others are redundant and have no predictive value. This year, the pilot team has made major progress in training the AI on smaller subsets of the data without losing predictive power. The team has also developed techniques to link features identified in one type of data (say, cell-line data) with corresponding features in other types of data (for example, patient-derived tumor models or patient data).
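The post does not say how the team separates informative features from redundant ones. The sketch below is purely an illustration of the idea and is not the pilot's method. It applies one simple, generic filter: drop any feature that never varies across samples, then drop any feature that is almost perfectly correlated with a feature already kept. The function names, the 0.95 threshold and the toy feature values are all hypothetical.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation of two equal-length sequences (assumes neither is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def prune_redundant_features(features: dict[str, list[float]],
                             threshold: float = 0.95) -> list[str]:
    """Hypothetical filter: keep features that vary and are not near-copies of a kept feature."""
    kept: list[str] = []
    for name, values in features.items():
        if max(values) == min(values):
            continue  # constant across samples, so it cannot help tell samples apart
        if any(abs(pearson(values, features[k])) >= threshold for k in kept):
            continue  # almost a linear copy of a feature that is already kept
        kept.append(name)
    return kept


# Toy, made-up values for four samples.
features = {
    "gene_a": [1.0, 2.0, 3.0, 4.0],
    "gene_a_copy": [2.0, 4.0, 6.0, 8.0],  # perfectly correlated with gene_a
    "gene_b": [4.0, 1.0, 3.0, 2.0],
    "batch_flag": [1.0, 1.0, 1.0, 1.0],   # identical for every sample
}
print(prune_redundant_features(features))  # ['gene_a', 'gene_b']
```

This filter only removes redundancy among the inputs. A feature can be non-redundant and still have no predictive value, so real pipelines usually also consult the prediction target, for example through model-based importance scores.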

The Energy Department and National Cancer Institute are teaming up in the fight against cancer. | Image courtesy of the NCI Patient Derived Models Repository (pdmr.cancer.gov)

Another challenge is that clinical data may be sparse, noisy, or both. AI predictions would be particularly valuable in these situations, but it can be hard to know whether they are accurate. To better understand how reliable the predictions are, the pilot team is developing new methods for uncertainty quantification (UQ). These methods will allow the AI to flag where its predictions are highly uncertain, indicating when and where more experimental and clinical data are needed. Researchers can then run further experiments and feed the new results back into the AI tools. This feedback loop helps optimize experimental design and technical development.
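The post does not name the UQ methods the team is developing. One widely used technique is ensemble disagreement. You train several models independently and treat the spread of their predictions for a sample as that sample's uncertainty. The most uncertain samples are then natural candidates for the follow-up experiments that close the feedback loop. The minimal sketch below assumes that approach. The sample names, prediction values and helper functions are hypothetical.

```python
from statistics import mean, stdev

# Hypothetical input: for each (cell line, drug) pair, the drug response predicted
# by each member of an ensemble of independently trained models.
EnsemblePreds = dict[str, list[float]]


def summarize(preds: EnsemblePreds) -> dict[str, tuple[float, float]]:
    """Return (mean prediction, ensemble spread) per sample; the spread is the uncertainty estimate."""
    return {sample: (mean(values), stdev(values)) for sample, values in preds.items()}


def pick_next_experiments(preds: EnsemblePreds, k: int) -> list[str]:
    """Rank samples by ensemble disagreement and return the k most uncertain ones."""
    summary = summarize(preds)
    ranked = sorted(summary, key=lambda sample: summary[sample][1], reverse=True)
    return ranked[:k]


# Made-up predictions from a four-model ensemble.
preds: EnsemblePreds = {
    "lineA+drug1": [0.42, 0.40, 0.44, 0.41],  # models agree
    "lineB+drug1": [0.10, 0.65, 0.30, 0.80],  # models disagree strongly
    "lineC+drug2": [0.55, 0.50, 0.62, 0.58],
}

for sample, (mu, sigma) in summarize(preds).items():
    print(f"{sample}: predicted response {mu:.2f} ± {sigma:.2f}")
print(pick_next_experiments(preds, 1))  # ['lineB+drug1']
```

Ensemble spread mostly reflects model uncertainty in regions where training data are scarce. It does not by itself separate out measurement noise, which other UQ methods model explicitly.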

While AI models may be able to predict a drug response accurately, they can’t tell us why: they are not explanatory. The pilot team is pursuing an innovative hybrid approach that introduces mechanistic models into the AI framework. This helps researchers interpret the results and identify new directions for improving our understanding of the underlying biology.
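The post does not describe how the team couples mechanistic and learned models. One generic pattern, shown here purely as an illustration, is a residual hybrid. A mechanistic model with biologically meaningful parameters makes the base prediction, and a data-driven component learns only the part the mechanism fails to explain. In this sketch the mechanistic part is a standard Hill dose-response curve. The "learned" part is a one-feature least-squares fit standing in for an AI model. The parameter values, the feature and the toy data are all hypothetical.

```python
# Mechanistic part: Hill dose-response curve with interpretable parameters.
def hill_response(dose: float, ic50: float, hill: float) -> float:
    """Fraction of cells surviving at `dose`; `ic50` is the dose giving 50% survival."""
    return 1.0 / (1.0 + (dose / ic50) ** hill)


# Data-driven part: a one-feature least-squares fit, standing in for a learned model.
def fit_residual_correction(features: list[float], residuals: list[float]) -> tuple[float, float]:
    """Fit residual ≈ a + b * feature by ordinary least squares and return (a, b)."""
    n = len(features)
    mf = sum(features) / n
    mr = sum(residuals) / n
    sxx = sum((f - mf) ** 2 for f in features)
    b = sum((f - mf) * (r - mr) for f, r in zip(features, residuals)) / sxx
    return mr - b * mf, b


def hybrid_predict(dose: float, feature: float, ic50: float, hill: float,
                   a: float, b: float) -> float:
    """Mechanistic prediction plus the learned correction for what the mechanism misses."""
    return hill_response(dose, ic50, hill) + a + b * feature


# Toy data (hypothetical): observed survival deviates from the Hill curve in
# proportion to an illustrative per-sample feature, e.g. a marker's expression level.
IC50, HILL = 1.0, 1.0
doses = [0.5, 1.0, 2.0, 4.0]
features = [0.0, 1.0, 2.0, 3.0]
observed = [hill_response(d, IC50, HILL) + 0.1 * f for d, f in zip(doses, features)]

# Train the data-driven part only on what the mechanistic model leaves unexplained.
residuals = [y - hill_response(d, IC50, HILL) for y, d in zip(observed, doses)]
a, b = fit_residual_correction(features, residuals)
print(f"correction: residual ≈ {a:.3f} + {b:.3f} * feature")
print(f"hybrid prediction at dose 1.5, feature 2.0: {hybrid_predict(1.5, 2.0, IC50, HILL, a, b):.3f}")
```

The mechanistic model still makes the base prediction, so its parameters (such as the IC50) stay interpretable. The size and structure of the learned correction then point to where the mechanism is incomplete. That is the kind of clue about the underlying biology the hybrid approach aims to surface.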

The pilot team’s advances in applying AI to heterogeneous data sets, adding hybrid modeling, and developing UQ will enable an entirely new approach to preclinical screening of new cancer drugs, one that takes advantage of the wealth of data now available. The techniques are also broadly applicable and are likely to benefit many other fields. In fact, many of the tools the pilots are developing will be made publicly available through CANDLE (the CANcer Distributed Learning Environment) and are influencing DOE’s next-generation supercomputer development.

NCI published a post describing the pilot team’s work in detail. Watch here for more posts describing the goals and early achievements of the other JDACS4C pilot teams.

Andrea Peterson

Andrea Peterson is a AAAS Science & Technology Policy Fellow in the Office of High Energy Physics.

Michael Cooke

Michael Cooke is a senior technical advisor for the Office of the Deputy Director for Science Programs. He leads the coordination of efforts to develop and steward community open research data resources across the Office of Science.